Typically, CodeBuild is used as part of your CI/CD pipeline, perhaps along with other AWS tools like CodeCommit, CodePipeline and CodeDeploy.

This blog will explore the use of CodeBuild to build the Bedrock project and update a yum repository. Along the way I'll detail some of the things I've learned and the path I took to automating the Bedrock build.

Until now, the Bedrock build has been a manual process. In an EC2-based development environment, I would:

- Check in all of the code to the git repository

- Run a configure script in the project root

- Run make dist to create a distribution tarball

- Run rpmbuild

- Create a local yum repository

- Sync the local yum repository to an S3 bucket set up as a website
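Taken together, the manual steps above amount to something like the following sketch. The branch name, the tarball glob, the use of `rpmbuild -tb` (which assumes the tarball carries its own spec file), the `noarch` RPM directory, `REPO_DIR` and `REPO_BUCKET` are all assumptions for illustration, not Bedrock's actual layout. Setting `DRY_RUN=1` prints the commands instead of running them.

```shell
#!/usr/bin/env bash
# Sketch of the manual build, roughly one command per step in the list above.
# REPO_DIR, REPO_BUCKET and the bedrock-*.tar.gz name are hypothetical.
REPO_DIR=${REPO_DIR:-$HOME/yum-repo}
REPO_BUCKET=${REPO_BUCKET:-example-yum-bucket}

# Print the command when DRY_RUN=1, otherwise execute it.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

# Each step runs only if the previous one succeeded.
manual_build() {
  run git push origin master &&                          # check in the code
  run ./configure &&                                     # configure in the project root
  run make dist &&                                       # create the distribution tarball
  run rpmbuild -tb bedrock-*.tar.gz &&                   # build the RPMs from the tarball
  run mkdir -p "$REPO_DIR" &&
  run cp "$HOME"/rpmbuild/RPMS/noarch/*.rpm "$REPO_DIR"/ &&
  run createrepo "$REPO_DIR" &&                          # create the local yum repository
  run aws s3 sync "$REPO_DIR" "s3://$REPO_BUCKET/"       # publish to the S3 website bucket
}
```

Running `DRY_RUN=1 manual_build` is a cheap way to review the sequence before automating it.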

I decided to try to capitalize on Amazon's CI/CD toolchain, specifically CodeBuild, to automate the build process for Bedrock. The first step was to make sure that the build could be done in an environment other than my development environment. CodeBuild essentially runs your build in a Docker container, using either an image you supply or one from AWS's list of managed images, so you'll want to make sure you understand and account for all of your build's dependencies. Here's the list of images you can choose from:

http://docs.aws.amazon.com/codebuild/latest/userguide/build-env-ref.html#build-env-ref-available

[Figure: Specify the Docker image ID to use for the build]

You can choose a Docker image from your AWS container registry (ECR), one of Amazon's managed images referenced above, or an Ubuntu image with build environments for popular languages like Java, Node.js, Golang or Python. If you want to use one of the images in the link above, select "Specify a Docker image" and then select "Other" in the field labeled "Custom image type". You can then copy the image specification from the list and paste it into the "Custom image ID" field.

I first created my own Docker image and uploaded it to ECR, which worked. After learning a little more about how CodeBuild works, and that you can tell CodeBuild to install required dependencies pretty easily, I tried one of the Linux AMIs, which turned out to work just as well and eliminated the need for me to manage my own Docker image.
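One way to find missing dependencies before involving CodeBuild at all is to run the build in a clean container locally. The sketch below is only that: it assumes Docker is installed, uses the public `amazonlinux` image as a stand-in for whichever image you pick, installs the package list from my build spec, and then runs the configure and make dist steps from the manual process.

```shell
#!/usr/bin/env bash
# Run the dependency install and the first build steps in a throwaway container.
# IMAGE is an assumption; substitute the image you plan to use in CodeBuild.
IMAGE=${IMAGE:-amazonlinux:latest}

try_build_in_container() {
  src_dir=${1:-$PWD}
  # Mount the source tree at /src and work from there; --rm discards the
  # container afterwards so every run starts from a clean image.
  docker run --rm -v "$src_dir":/src -w /src "$IMAGE" sh -c \
    'yum install -y util-linux rpm-build rpm-sign wget expect aws-cli createrepo && ./configure && make dist'
}
```

If the build fails here because a tool is missing, that tool belongs in the install phase of the build spec.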

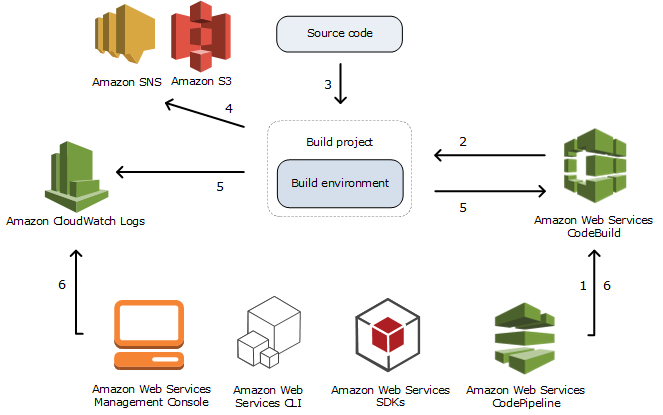

So, as the picture at the top suggests, you kick off your CodeBuild project from the AWS console, the AWS CLI, an AWS SDK or as part of a CodePipeline project (you can also use the API directly by sending an HTTP request). With CodePipeline, events like a commit to your GitHub or CodeCommit repository automatically trigger a build of your CodeBuild project. To get started, I kicked off my builds in the console.

When you start a build project in the console you specify several required parameters in the GUI.

Create a name for the project and then tell CodeBuild what to build by specifying a source from which to build. You can specify either:

- A GitHub repository

- CodeCommit

- An S3 bucket and key (a zip file)

Here's the build spec file (buildspec.yml) for my project:

    version: 0.1

    environment_variables:
      plaintext:
        PATH: "/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin"

    phases:
      install:
        commands:
          - yum update -y
          - yum install -y util-linux rpm-build rpm-sign wget expect aws-cli createrepo
      pre_build:
        commands:
          - aws s3 cp s3://openbedrock/gnupg.tar.gz /root/
          - cd /root && tar xfvz gnupg.tar.gz
          - mkdir /root/rpmbuild
          - echo -e "%_topdir /root/rpmbuild\n%_gpg_name OpenBedrock" > /root/.rpmmacros
      build:
        commands:
          - cd $CODEBUILD_SRC_DIR && ./build build -r -x
          - cd $CODEBUILD_SRC_DIR && ./build deploy

The build spec file lets you set up environment variables and initialize the container by executing commands in each of two phases that run before the build. In the "install" phase you install any dependencies your build requires. Each command runs in a separate shell, so a cd or an exported variable in one command does not carry over to the next. From the docs: "AWS CodeBuild runs each command, one at a time, in the order listed, from beginning to end."

The pre_build section runs commands that prep your environment or set up other build dependencies. In my case, I download my signing keys and prepare the RPM build environment.

In the build phase you specify the commands that create the build artifacts. My project includes a build script that does the build as I discussed earlier. After the build is complete, I run my build script with a "deploy" option that syncs the result to an S3 bucket I use as a yum repository. Here's a blog I wrote regarding how to set up an S3 bucket as a yum repo.

CodeBuild is not particularly good at reporting errors in general, so make sure your YAML file is valid. Even if it is valid, if you get a key name wrong (like using "pre-build" instead of "pre_build", as I did) you may find CodeBuild failing silently.
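Since a misspelled phase name can go unreported, a small pre-flight check is cheap insurance. This is a minimal sketch, not a YAML parser: it assumes the two-space indentation used in my build spec and only looks at the keys directly under `phases:`. The function name `check_phases` is made up for this example.

```shell
#!/usr/bin/env bash
# Report keys under "phases:" that are not one of the phase names
# CodeBuild recognizes (install, pre_build, build, post_build).
# Assumes two-space indentation for the phase keys.
check_phases() {
  awk '
    /^phases:[[:space:]]*$/ { in_phases = 1; next }   # entering the phases map
    /^[^[:space:]#]/        { in_phases = 0 }         # any other top-level key ends it
    in_phases && /^  [^ #-][^:]*:/ {
      name = $1; sub(/:.*/, "", name)
      if (name !~ /^(install|pre_build|build|post_build)$/) {
        printf "unknown phase: %s\n", name; bad = 1
      }
    }
    END { exit bad }
  ' "$1"
}
```

Calling `check_phases buildspec.yml` returns non-zero and names the offending key, so it can gate a commit hook or a wrapper around the build.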

Now provide the environment to use. As discussed above that can be a Docker image managed by AWS, your own Docker image or an Ubuntu pre-cooked environment specific to some language.

If your project specifies artifacts in the build spec file under the "artifacts" section, then add an S3 bucket and folder name where those artifacts are to be stored.

You can add an "artifacts" section to your build spec file that tells CodeBuild where in your container the build artifacts can be found. You tell CodeBuild what to do with those artifacts when you set up the project in the GUI. The two choices are "No artifacts" or S3. If you choose S3, CodeBuild will ask you for a folder name. It turns out you can't install the artifacts to the root of the S3 bucket, so I was unable to use that method to update my yum repository. Instead, the "deploy" option of my build script just syncs to my S3 bucket after I create the yum repo locally.

The build project assumes an IAM role, so you'll need to either let CodeBuild create one and then modify it (recommended) or create one from scratch and provide it in the Service role section.
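The console choices described above (name, source, environment, artifacts, service role) map onto a single CLI call. The sketch below wraps `aws codebuild create-project` in a function; the function name and every value in the usage comment are placeholders, and the compute type is just an assumption for a small build.

```shell
#!/usr/bin/env bash
# Create a CodeBuild project from the CLI instead of the console.
# Arguments: project name, GitHub repository URL, Docker image, service role ARN.
create_build_project() {
  aws codebuild create-project \
    --name "$1" \
    --source "type=GITHUB,location=$2" \
    --artifacts "type=NO_ARTIFACTS" \
    --environment "type=LINUX_CONTAINER,image=$3,computeType=BUILD_GENERAL1_SMALL" \
    --service-role "$4"
}

# Usage (hypothetical values):
#   create_build_project bedrock-build \
#     https://github.com/example/bedrock.git \
#     123456789012.dkr.ecr.us-east-1.amazonaws.com/bedrock-build:latest \
#     arn:aws:iam::123456789012:role/codebuild-bedrock-service-role
```

I use "No artifacts" here for the same reason as in the console: my build script does its own sync to S3.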

Permissions

There are a couple of things to keep in mind regarding permissions. If you are kicking off your build with an account other than your root account (and you should be!), you're going to need permissions to run CodeBuild. Here's a policy I attached to a "developer" role.

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Sid": "Enable_CodeBuild",
          "Effect": "Allow",
          "Action": [
            "codebuild:*"
          ],
          "Resource": "*"
        }
      ]
    }

I probably should be more restrictive and only allow StartBuild and StopBuild. I could also have used one of the managed policies provided by AWS, AWSCodeBuildDeveloperAccess or AWSCodeBuildReadOnlyAccess, although the read-only policy lets you view builds but not start them.
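A more restrictive version of that policy might look like the sketch below, which prints a policy document scoped to one project. The function name is made up, the region, account ID and project name are arguments you supply, and I've added BatchGetBuilds on the assumption that you also want to check on the builds you start.

```shell
#!/usr/bin/env bash
# Print a policy that only allows starting, stopping and inspecting
# builds of a single project.
# Arguments: region, account ID, project name.
write_developer_policy() {
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "StartAndStopBuilds",
      "Effect": "Allow",
      "Action": [
        "codebuild:StartBuild",
        "codebuild:StopBuild",
        "codebuild:BatchGetBuilds"
      ],
      "Resource": "arn:aws:codebuild:$1:$2:project/$3"
    }
  ]
}
EOF
}

# Usage (hypothetical account ID and names):
#   write_developer_policy us-east-1 123456789012 bedrock-build > policy.json
#   aws iam put-role-policy --role-name developer \
#     --policy-name codebuild-start-stop --policy-document file://policy.json
```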

You'll also need to make sure that the service role policy created when you set up the build project includes the permissions needed to write to your S3 bucket, if that's what your build does. One thing that tripped me up was that I was also granting permissions on the objects during my bucket sync. Setting an ACL with --grants requires s3:PutObjectAcl in addition to s3:PutObject.

    # sync local repo with S3 bucket, make it PUBLIC
    PERMISSION="--grants read=uri=http://acs.amazonaws.com/groups/global/AllUsers"
    aws s3 sync --include="*" ${repo} s3://$REPO_BUCKET/ $PERMISSION
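For a sync like the one above, the build's service role needs to list the bucket, write objects and set their ACLs. The sketch below prints a policy along those lines. The function name and bucket argument are placeholders, and I've included s3:GetObject on the assumption that the build also downloads files from the same bucket.

```shell
#!/usr/bin/env bash
# Print an S3 policy for the CodeBuild service role.
# Argument: bucket name.
write_s3_sync_policy() {
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:ListBucket"],
      "Resource": "arn:aws:s3:::$1"
    },
    {
      "Effect": "Allow",
      "Action": ["s3:GetObject", "s3:PutObject", "s3:PutObjectAcl"],
      "Resource": "arn:aws:s3:::$1/*"
    }
  ]
}
EOF
}

# Usage (hypothetical bucket and role names):
#   write_s3_sync_policy example-yum-bucket > s3-policy.json
#   aws iam put-role-policy --role-name codebuild-bedrock-service-role \
#     --policy-name yum-repo-sync --policy-document file://s3-policy.json
```

The bucket-level and object-level actions sit in separate statements because ListBucket applies to the bucket ARN while the object actions apply to the keys under it.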


Kicking Off the Build

As noted above, you can kick off the build in several ways.

- Set up a CodePipeline project and trigger the build when a GitHub commit is done

- Use the AWS CLI

- Use the AWS SDK

- Use the AWS console

- Send an API request

Here's the CLI command:

aws codebuild start-build --region=us-east-1 --project-name="bedrock-build"
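The start-build call returns immediately, so a script usually wants to wait for the result. Here is a minimal sketch that starts a build and polls `batch-get-builds` until the status is no longer IN_PROGRESS. The function name and `POLL_SECONDS` are my own choices, and the region is assumed to come from your CLI configuration rather than a --region flag.

```shell
#!/usr/bin/env bash
# Start a build and wait for it to finish.
# POLL_SECONDS is an assumption; pick whatever suits your build length.
POLL_SECONDS=${POLL_SECONDS:-15}

start_and_wait() {
  project=$1
  # --query extracts just the build ID from the JSON response.
  build_id=$(aws codebuild start-build --project-name "$project" \
    --query 'build.id' --output text) || return 1
  while :; do
    status=$(aws codebuild batch-get-builds --ids "$build_id" \
      --query 'builds[0].buildStatus' --output text) || return 1
    echo "$build_id: $status"
    [ "$status" = "IN_PROGRESS" ] || break
    sleep "$POLL_SECONDS"
  done
  # Succeed only if the build did.
  [ "$status" = "SUCCEEDED" ]
}
```

`start_and_wait bedrock-build` then exits non-zero for any final status other than SUCCEEDED, which makes it easy to chain with other commands.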

Since Perl is my language of choice, using the AWS SDK was not an option, so I hacked together a little Perl script that sends the API request over HTTP.

    #!/usr/bin/perl

    # Start a build using the AWS CodeBuild API

    use strict;
    use warnings;

    use AWS::Signature4;
    use Data::Dumper;
    use HTTP::Request;
    use JSON;
    use LWP::UserAgent;

    # Credentials come from the standard AWS environment variables
    my $signer = AWS::Signature4->new(
      -access_key => $ENV{AWS_ACCESS_KEY_ID},
      -secret_key => $ENV{AWS_SECRET_ACCESS_KEY}
    );

    my $ua = LWP::UserAgent->new();

    # StartBuild is a JSON POST; X-Amz-Target names the API action
    my $request = HTTP::Request->new( POST => 'https://codebuild.us-east-1.amazonaws.com' );
    $request->content('{"projectName" : "bedrock-build"}');
    $request->header( 'Content-Type' => 'application/x-amz-json-1.1' );
    $request->header( 'X-Amz-Target' => 'CodeBuild_20161006.StartBuild' );

    # Add the Signature Version 4 headers
    $signer->sign($request);

    my $response = $ua->request($request);

    if ( $response->is_success ) {
      my $rsp = $response->decoded_content( raise_error => 1 );
      print Dumper from_json($rsp);
    }
    else {
      printf STDERR "error (%s) submitting request for build: (%s)\n",
        $response->status_line, $response->decoded_content;
      exit 1;
    }

Conclusion

CodeBuild is cool. Try it. It's cheap too. At $0.005 per build minute, a typical build will cost you only a few pennies. Depending on how often you need to do a full build and how complex it is, you may find CodeBuild to be a perfect addition to your toolbox.
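To put a number on "a few pennies", the arithmetic is just minutes times the per-minute rate. The sketch below uses the rate quoted above as a default; `RATE` and the function name are my own, and current pricing may differ from the figure in this post.

```shell
#!/usr/bin/env bash
# Estimate the cost of a build in dollars.
# RATE defaults to the per-minute figure quoted in this post.
RATE=${RATE:-0.005}

build_cost() {
  awk -v minutes="$1" -v rate="$RATE" 'BEGIN { printf "%.3f\n", minutes * rate }'
}
```

For example, `build_cost 10` prints 0.050, so a ten-minute build comes to five cents.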

Up Next

Automating the build using CodePipeline...
